The standard error is a measure of the amount of error. In Excel, the STEYX function returns the standard error of the predicted y-value for each x in the regression. In regression analysis, the term standard error refers either to the square root of the reduced chi-squared statistic, or the standard error for a particular regression coefficient.

Standard errors of regression coefficients

Simple regression model: $Y = b_1 + b_2 X + u$. We have seen that the estimated regression coefficients $\hat{b}_1$ and $\hat{b}_2$ are random variables. They provide point estimates of $b_1$ and $b_2$, respectively. In the last sequence we demonstrated that these point estimates are unbiased.

Observations can be outliers for a number of different reasons. Statisticians must always be careful, and more importantly transparent, when dealing with outliers. Sometimes, a better model fit can be achieved by simply removing outliers and re-fitting the model. However, one must have strong justification for doing this. A desire to have a higher \(R^2\) is not a good enough reason!

In the mlbBat10 data, the outlier with an OBP of 0.550 is Bobby Scales, an infielder who had four hits in 13 at-bats for the Chicago Cubs. Scales also walked seven times, resulting in his unusually high OBP. The justification for removing Scales here is weak. While his performance was unusual, there is nothing to suggest that it is not a valid data point, nor is there a good reason to think that somehow we will learn more about Major League Baseball players by excluding him.

Nevertheless, we can demonstrate how removing him will affect our model. Use filter() to create a subset of mlbbat10 called nontrivial_players consisting of only those players with at least 10 at-bats and obp of below 0.500.
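The filtering step might look roughly like the sketch below. It uses dplyr's filter() on a small stand-in data frame so that it runs without the real data. The column names at_bat, obp and slg, and the choice of slg as the response, are assumptions. Only the at-bats and OBP in the Scales row come from the text; every other value is made up for illustration.

```r
library(dplyr)

# Toy stand-in for mlbbat10. Only the at-bats (13) and OBP (0.550) of the
# Scales row are quoted in the text; his slg and all other rows are invented.
# Column names (at_bat, obp, slg) are assumed, not confirmed by the source.
players <- data.frame(
  name   = c("B Scales", "Player A", "Player B", "Player C", "Player D", "Player E"),
  at_bat = c(13, 520, 8, 310, 45, 600),
  obp    = c(0.550, 0.340, 0.250, 0.310, 0.290, 0.380),
  slg    = c(0.385, 0.450, 0.125, 0.400, 0.330, 0.520)
)

# Keep players with at least 10 at-bats and an OBP below 0.500.
nontrivial_players <- players %>%
  filter(at_bat >= 10, obp < 0.500)

# Fit the same simple regression with and without the excluded players.
mod_all     <- lm(slg ~ obp, data = players)
mod_cleaner <- lm(slg ~ obp, data = nontrivial_players)

# Compare the fitted coefficients to see how much the exclusion matters.
coef(mod_all)
coef(mod_cleaner)
```

With the real data, the same two filter() conditions would be applied to mlbbat10 directly. Whatever the comparison shows, it should be reported alongside the full-data fit rather than used as a reason to drop the observation.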

CALCULATE STANDARD ERROR OF REGRESSION MOD

You can recover the residuals from mod with residuals(), and the degrees of freedom with df.residual().

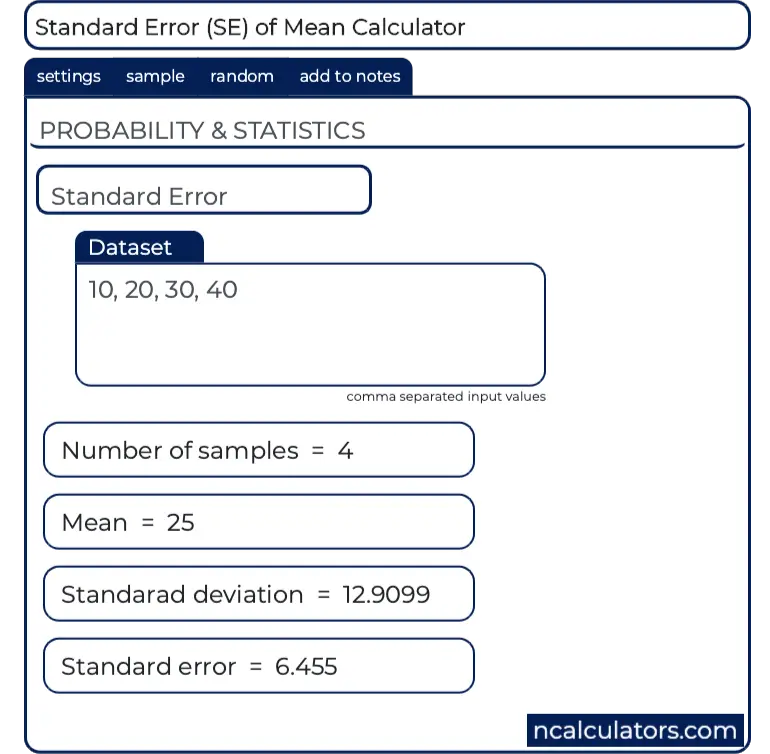

To make this estimate unbiased, you have to divide the sum of the squared residuals by the degrees of freedom in the model. R calls this quantity the residual standard error.

Thus, it makes more sense to compute the square root of the mean squared residual, or root mean squared error (RMSE). In fact, it is guaranteed by the least squares fitting procedure that the mean of the residuals is zero. However, recall that some of the residuals are positive, while others are negative. The magnitude of a typical residual can give us a sense of generally how close our estimates are. That is, for some observations, the fitted value will be very close to the actual value, while for others it will not. One way to assess strength of fit is to consider how far off the model is for a typical case.
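As a minimal sketch of this calculation, the block below computes the residual standard error by hand from residuals() and df.residual() and compares it with the value that summary() reports. The model fitted here (mpg on wt from the built-in mtcars data) is only a stand-in for mod, chosen so the example runs in base R.

```r
# Stand-in model: mtcars ships with base R; the text's `mod` would be used instead.
mod <- lm(mpg ~ wt, data = mtcars)

res <- residuals(mod)     # observed minus fitted values
dof <- df.residual(mod)   # n minus the number of estimated coefficients

# Least squares guarantees the residuals average to zero (up to rounding).
mean(res)

# Naive RMSE divides by n; the residual standard error divides by the d.f.
rmse_naive <- sqrt(mean(res^2))
rse        <- sqrt(sum(res^2) / dof)

# The hand-computed value should match what summary() reports.
all.equal(rse, summary(mod)$sigma)
```

Dividing by the degrees of freedom rather than by n makes the estimate slightly larger, which is why rse exceeds rmse_naive here.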


The ordinary regression coefficients and their standard errors, shown in range E3:G6, are copied from Figure 5 of Multiple Regression using Excel. We can now calculate the standardized regression coefficients and their standard errors, as shown in range E9:G11, using the above formulas. the standard regression coefficient for Color (cell …

I have results from a regression analysis conducted with another program and I would like to test with R whether they are significant. I know that ls.diag() calculates standard errors and t-tests for regression results, but it requires a very specific input format (i.e., the result of lsfit()), so I don't think I can use that.

Consider the simple regression model $y_i = \beta_1 + \beta_2 x_i + \epsilon_i$ fitted from $n$ observations, where the $\epsilon_i$ are iid with the same variance $\sigma^2$. The OLS estimators of $\beta_1$ and $\beta_2$ are given by

$$\hat{\beta}_2 = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2}, \qquad \hat{\beta}_1 = \bar{y} - \hat{\beta}_2 \bar{x}.$$

The formula $y = mx + b$ helps us calculate the mathematical equation of our regression line. Substituting the values for y-intercept and slope we got from extending the regression line, we can formulate the equation $y = 0.01x - 2.48$. In the example above, the coefficient would just be $m = (y_2 - y_1) / (x_2 - x_1)$. And in this case, it would be close.

The estimated standard error of $\hat{\beta}_1$ is a good estimate of the true standard error, even though we are calculating it from only one sample. So, from our one sample, we can compute an estimate of $\beta_1$ and an estimate of the standard error. This is where the standard error in regression output comes from.
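To connect these pieces, here is a rough sketch in base R of how the coefficient standard errors in regression output can be reproduced by hand. It also shows how a coefficient reported by another program can be tested for significance from its estimate, its standard error and the residual degrees of freedom alone. The data (mpg and wt from the built-in mtcars set) and all variable names are illustrative assumptions. The formulas are the usual ones for simple regression with iid errors of common variance.

```r
# Illustrative data: any numeric x and y would do.
x <- mtcars$wt
y <- mtcars$mpg
n <- length(x)

# OLS estimates for the model y = b1 + b2 * x + error.
sxx <- sum((x - mean(x))^2)
b2  <- sum((x - mean(x)) * (y - mean(y))) / sxx   # slope
b1  <- mean(y) - b2 * mean(x)                     # intercept

# Estimate the error variance from the residuals, dividing by n - 2.
res <- y - (b1 + b2 * x)
s2  <- sum(res^2) / (n - 2)

# Standard errors of the two coefficients.
se_b2 <- sqrt(s2 / sxx)
se_b1 <- sqrt(s2 * (1 / n + mean(x)^2 / sxx))

# Two-sided t-test of "slope = 0" using only estimate, SE and d.f.,
# which is all that is needed for results produced by another program.
t_b2 <- b2 / se_b2
p_b2 <- 2 * pt(abs(t_b2), df = n - 2, lower.tail = FALSE)

# Cross-check against R's own regression output.
fit <- summary(lm(mpg ~ wt, data = mtcars))$coefficients
fit
c(se_b1 = se_b1, se_b2 = se_b2, t_b2 = t_b2, p_b2 = p_b2)
```

For externally produced results, only the last step is needed: plug the reported estimate and standard error into the t statistic and use the residual degrees of freedom from that analysis.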